Let us assume that we have to solve the following problem:

"Find the number of times each URL is accessed by parsing a log file containing URL and date stamp pairs"

Solution 1

This is easy. Traverse the log file one line at a time and keep a running count for every unique URL that is encountered. This is easily accomplished in a Java program using a for loop and a HashMap.
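As a minimal sketch of this sequential approach, here is the same loop-and-hash-map idea written in Python for brevity (a Java version with a for loop and a HashMap would have the same shape). The function name `count_urls`, the comma-separated line format and the date stamps in the sample are assumptions made for illustration.

```python
from collections import defaultdict


def count_urls(lines):
    """Count how often each URL appears in an iterable of 'url,date' lines."""
    counts = defaultdict(int)
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        # Assumed format: the URL, a comma, then the date stamp.
        url = line.split(",", 1)[0]
        counts[url] += 1
    return dict(counts)


# Illustrative log lines; the date stamps are made up.
sample_log = [
    "http://hpccsystems.com,2011-06-01",
    "http://hpccsystems.com,2011-06-02",
    "http://hpccsystems.com/developers,2011-06-02",
    "http://hpccsystems.com/downloads,2011-06-03",
]

url_counts = count_urls(sample_log)
print(url_counts)
```

The whole file passes through a single loop on a single machine, which is exactly why this approach stops scaling once the input grows.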

Solution 1 works great if the input file is small. But what if you are dealing with a large volume of data: gigabytes, terabytes or even petabytes? Sequential processing is not a practical option for dealing with Big Data.

Solution 2

In this solution, the input is split into multiple <key, value> pairs that are fed to a map function in parallel. The map function converts each of them into intermediate <key, value> pairs. In our example, the map function outputs a <url, 1> pair for every input <key, value> pair, where url is the URL identified in the input and 1 records a single occurrence of it.

Before Map Step:

Key = 1 Value = http://hpccsystems.com

Key = 2 Value = http://hpccsystems.com

Key = 3 Value = http://hpccsystems.com/developers

Key = 4 Value = http://hpccsystems.com/downloads

After Map Step:

Key = http://hpccsystems.com Value = 1

Key = http://hpccsystems.com Value = 1

Key = http://hpccsystems.com/developers Value = 1

Key = http://hpccsystems.com/downloads Value = 1
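The map step above can be sketched in a few lines of Python. The function name `map_url` is hypothetical and does not belong to any MapReduce framework; the input pairs are the ones listed under "Before Map Step".

```python
def map_url(key, value):
    """Map step: ignore the input key and emit one (url, 1) pair."""
    yield (value, 1)


# Input <key, value> pairs, as listed before the map step.
inputs = [
    (1, "http://hpccsystems.com"),
    (2, "http://hpccsystems.com"),
    (3, "http://hpccsystems.com/developers"),
    (4, "http://hpccsystems.com/downloads"),
]

# Apply the map function to every input pair and collect the intermediate pairs.
mapped = [pair for key, value in inputs for pair in map_url(key, value)]

for url, one in mapped:
    print(f"Key = {url} Value = {one}")
```

Because each call to the map function depends only on its own input pair, the calls can run on different nodes without coordinating with each other.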

The data is then sorted by the intermediate keys (<url1, 1>, <url2, 1> etc.) so that all occurrences of the same key are grouped together. Each unique key, along with all of its values, is then passed to a reduce function. Depending on the framework, the reduce function can be called multiple times for the same key. In our example, the reduce function simply adds up the occurrences of each unique URL and outputs <url, total count>.

After Reduce Step:

Key = http://hpccsystems.com Value = 2

Key = http://hpccsystems.com/developers Value = 1

Key = http://hpccsystems.com/downloads Value = 1
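The sort, group and reduce steps can be sketched as follows. This is a single-process illustration, not how a real framework shuffles data between nodes, and `reduce_counts` is a hypothetical name. The input is the list of intermediate pairs shown after the map step.

```python
from itertools import groupby
from operator import itemgetter


def reduce_counts(url, values):
    """Reduce step: add up all the values emitted for one URL."""
    return (url, sum(values))


# Intermediate <url, 1> pairs produced by the map step.
mapped = [
    ("http://hpccsystems.com/downloads", 1),
    ("http://hpccsystems.com", 1),
    ("http://hpccsystems.com/developers", 1),
    ("http://hpccsystems.com", 1),
]

# Sort by key so that equal keys become adjacent, then group them.
mapped_sorted = sorted(mapped, key=itemgetter(0))
reduced = [
    reduce_counts(url, [value for _, value in group])
    for url, group in groupby(mapped_sorted, key=itemgetter(0))
]

for url, total in reduced:
    print(f"Key = {url} Value = {total}")
```

Summing the values, rather than counting the pairs, keeps the reduce function correct even if it receives partial totals instead of raw 1s.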

This process of solving the problem by using Map and Reduce steps is called MapReduce. The MapReduce paradigm was made famous by Google, which used it to process large volumes of crawled data by spreading the map and reduce jobs across several worker nodes in a cluster and executing them in parallel to achieve high throughput. Another well-known MapReduce framework is Hadoop.
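To get a feel for how the work is spread out, the sketch below uses a Python thread pool as a stand-in for the worker nodes of a cluster. That is an assumption made purely for illustration: a real framework ships chunks of data to separate machines and deals with failures, and the names `count_chunk` and `parallel_count` are hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def count_chunk(urls):
    """Work done by one 'worker': count the URLs in its own chunk."""
    return Counter(urls)


def parallel_count(urls, workers=2):
    """Split the input, count each chunk on a worker, then merge the results."""
    # Simple round-robin split: one chunk per worker.
    chunks = [urls[i::workers] for i in range(workers)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_counts = list(pool.map(count_chunk, chunks))
    # Merge the partial counts into the final totals.
    total = Counter()
    for partial in partial_counts:
        total.update(partial)
    return dict(total)


urls = [
    "http://hpccsystems.com",
    "http://hpccsystems.com",
    "http://hpccsystems.com/developers",
    "http://hpccsystems.com/downloads",
]

totals = parallel_count(urls, workers=2)
print(totals)
```

Each worker sees only its own chunk, so the final merge is the only point where the partial results have to come together.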

The major drawback of the MapReduce paradigm is that it is intended for batch-oriented jobs. It is suitable for ETL (Extraction, Transformation and Loading) but not for online query processing. Hadoop and its extensions, Hive and HBase, are therefore built on top of a batch-oriented framework that is not really meant for online query processing. The other drawback is that every task needs to be defined in terms of Map and Reduce steps so that the work can be distributed across nodes. In most cases it takes several Map and Reduce steps to solve a single problem.

Solution 3

SELECT url, count(*) FROM urllog GROUP BY url

How easy is that? No map or reduce logic. SQL, with its declarative nature, lets us concentrate on the What logic. However, SQL databases do not lend themselves to Big Data processing. Typical Big Data processing involves several clustered nodes processing information to produce an end result.

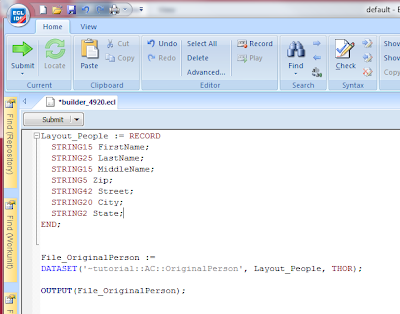
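To try the SQL query from Solution 3 on a small scale, here is a sketch that runs it against an in-memory SQLite database through Python's built-in sqlite3 module. The table name urllog and the url column come from the query itself; the accessed column, the sample rows and the function name `count_by_url` are assumptions. An ORDER BY clause is added only to make the row order predictable.

```python
import sqlite3


def count_by_url(rows):
    """Load (url, accessed) rows into an in-memory table and run the GROUP BY query."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE urllog (url TEXT, accessed TEXT)")
        conn.executemany("INSERT INTO urllog (url, accessed) VALUES (?, ?)", rows)
        cursor = conn.execute(
            "SELECT url, count(*) FROM urllog GROUP BY url ORDER BY url"
        )
        return cursor.fetchall()
    finally:
        conn.close()


# Illustrative rows; the date stamps are made up.
rows = [
    ("http://hpccsystems.com", "2011-06-01"),
    ("http://hpccsystems.com", "2011-06-02"),
    ("http://hpccsystems.com/developers", "2011-06-02"),
    ("http://hpccsystems.com/downloads", "2011-06-03"),
]

result = count_by_url(rows)
for url, total in result:
    print(url, total)
```

The query says only what result is wanted; how the rows are scanned and grouped is left entirely to the database engine.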

HPCC Systems ECL is specifically designed to overcome the limitations of SQL. ECL is a truly declarative language that is somewhat similar to SQL and lets you solve the problem by expressing the code as the What rather than the How. The complexity of clustering is encapsulated by the ECL language and hence is never really exposed to the programmer.

Simple ECL Code to find the count for each unique URL:

//Declare the input record structure. Assume an input CSV file of URL,Date fields

rec := RECORD

STRING50 url;

STRING50 date;

END;

//Declare the source of the data

urllog := DATASET('~tutorial::AC::Urllog',rec,CSV);

//Declare the record structure to hold the aggregates for each unique URL

grouprec := record

urllog.url;

groupCount := COUNT(GROUP);

end;

//The TABLE function is equivalent to an SQL SELECT command

//The following declaration is used to create a Cross Tab aggregate of the SELECT equivalent shown above

RepTable := TABLE(urllog,grouprec,url);

//Output the new record set

OUTPUT(RepTable);

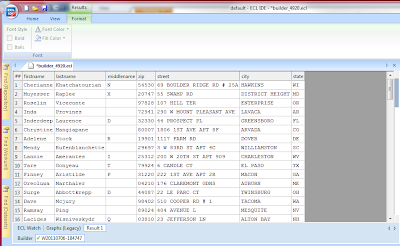

Sample Input:

The HPCC Platform will distribute the work across the nodes based on the most optimal path that is determined at runtime.

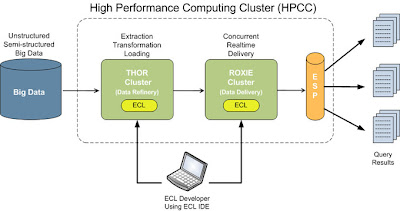

Compared to MapReduce frameworks like Hadoop, the HPCC Platform keeps it simple: the platform determines the best work distribution across nodes, so that the developer solves the What rather than worrying about How the work is distributed. Further, the HPCC Platform comprises two unique components that are optimized to solve specific problems. Thor is used as an ETL (Extraction, Transformation, Loading and Linking) engine, and Roxie is used as the online query processing engine.